Physical interactions using the body play an important role in human-human interaction because they allow people to exchange subtle information that is difficult to describe in words, e.g. comfort, preference, intention, and emotion. Robots working closely with humans must be able to understand, utilize, and express such information in order to interact with them effectively. The goal of empathetic physical interaction is to realize human-centered physical support, in which the robot uses its body as a medium for exchanging subtle information with its human partner and adapts its behavior based on that information to improve the partner's perception and, ultimately, the overall performance of the physical support. This talk will introduce two related ongoing projects at Honda Research Institute USA: the perception of a mobile robot's pedestrian avoidance behavior, and the modeling of intimate social interactions for a humanoid robot.

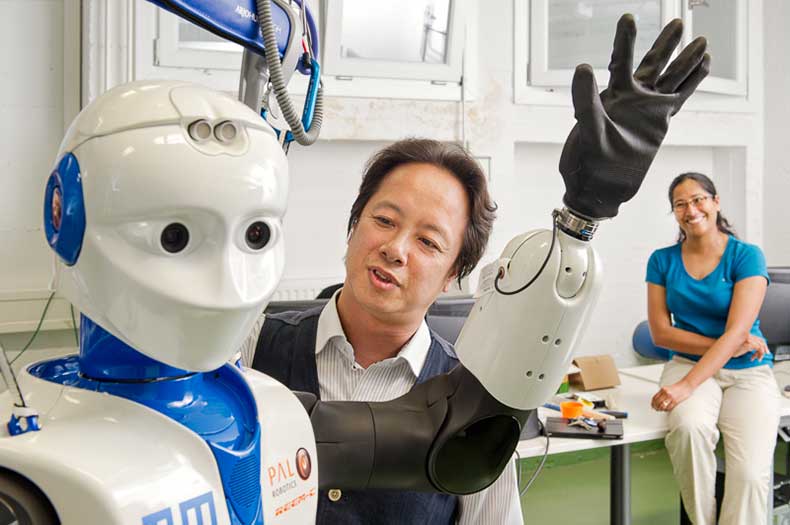

Gordon Cheng

Gordon Cheng holds the Chair of Cognitive Systems, where he teaches and lectures regularly. He is the founder and director of the Institute for Cognitive Systems in the Faculty of Electrical and Computer Engineering at the Technical University of Munich, Germany. He is also the coordinator of the Center of Competence (CoC) Neuro-Engineering in the Department of Electrical and Computer Engineering.

Formerly, he was Head of the Department of Humanoid Robotics and Computational Neuroscience at ATR Computational Neuroscience Laboratories in Kyoto, Japan. He was Group Leader of the then newly initiated JST International Cooperative Research Project (ICORP) Computational Brain, and he has been designated a Project Leader/Research Expert for the National Institute of Information and Communications Technology (NICT) of Japan. He is also involved, as an adviser and as an associated partner, in a number of major European Union projects.

Over the past ten years, Gordon Cheng has co-invented approximately 20 patents and authored approximately 250 technical publications, proceedings, editorials, and book chapters.